By A Mystery Man Writer

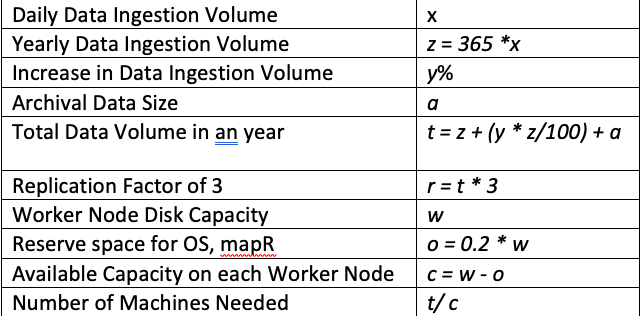

How many worker nodes should your cluster have? How should each worker node be configured? Both answers depend on the amount of data you will be processing. In this post I will…

Breaking the bank on Azure: what Apache Spark tool is the most cost-effective?, Intercept

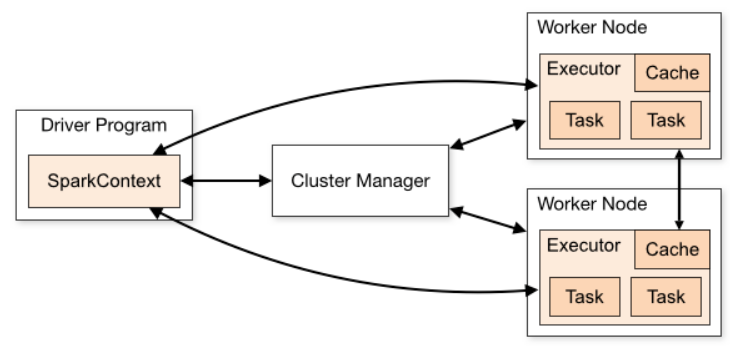

Explain Resource Allocation configurations for Spark application

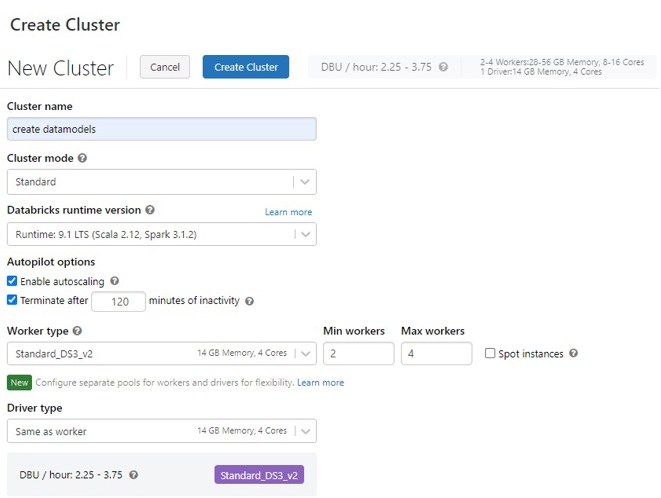

Azure Databricks - Cluster Capacity Planning

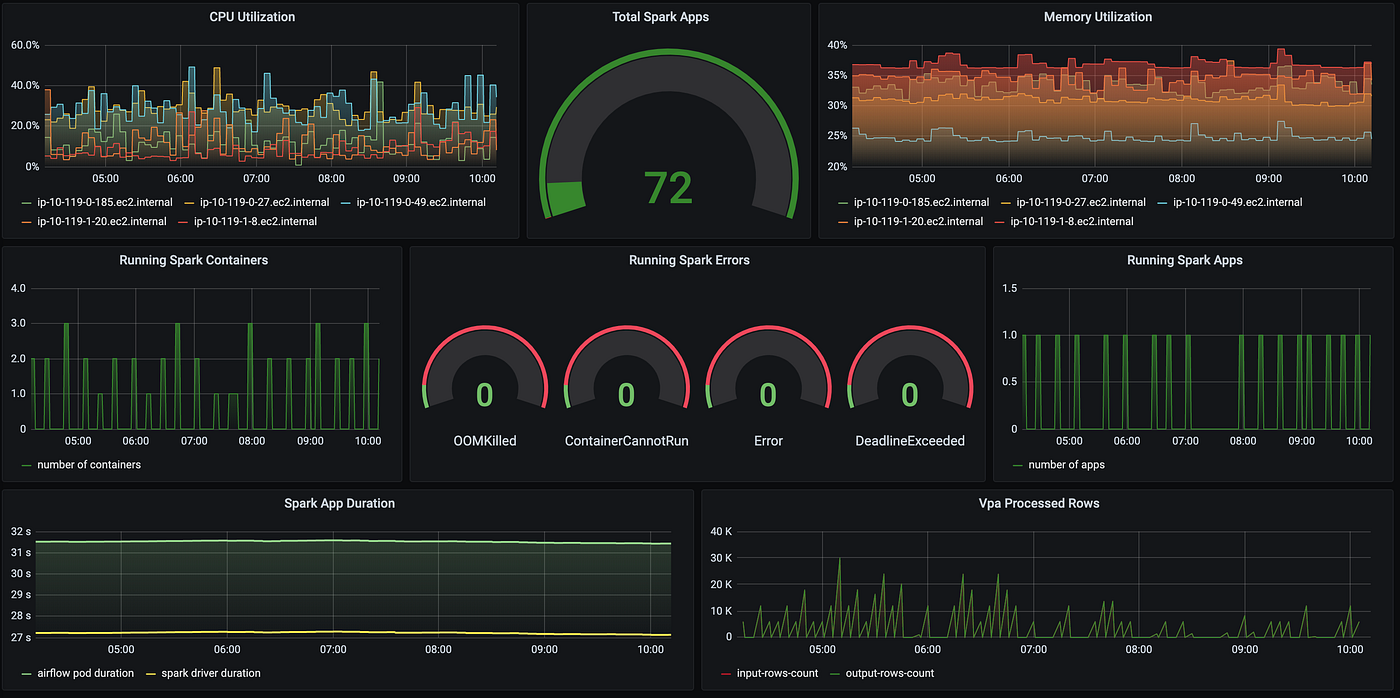

Migrating Apache Spark workloads from AWS EMR to Kubernetes, by Dima Statz

Apache Spark 101: Dynamic Allocation, spark-submit Command and Cluster Management, by Shanoj

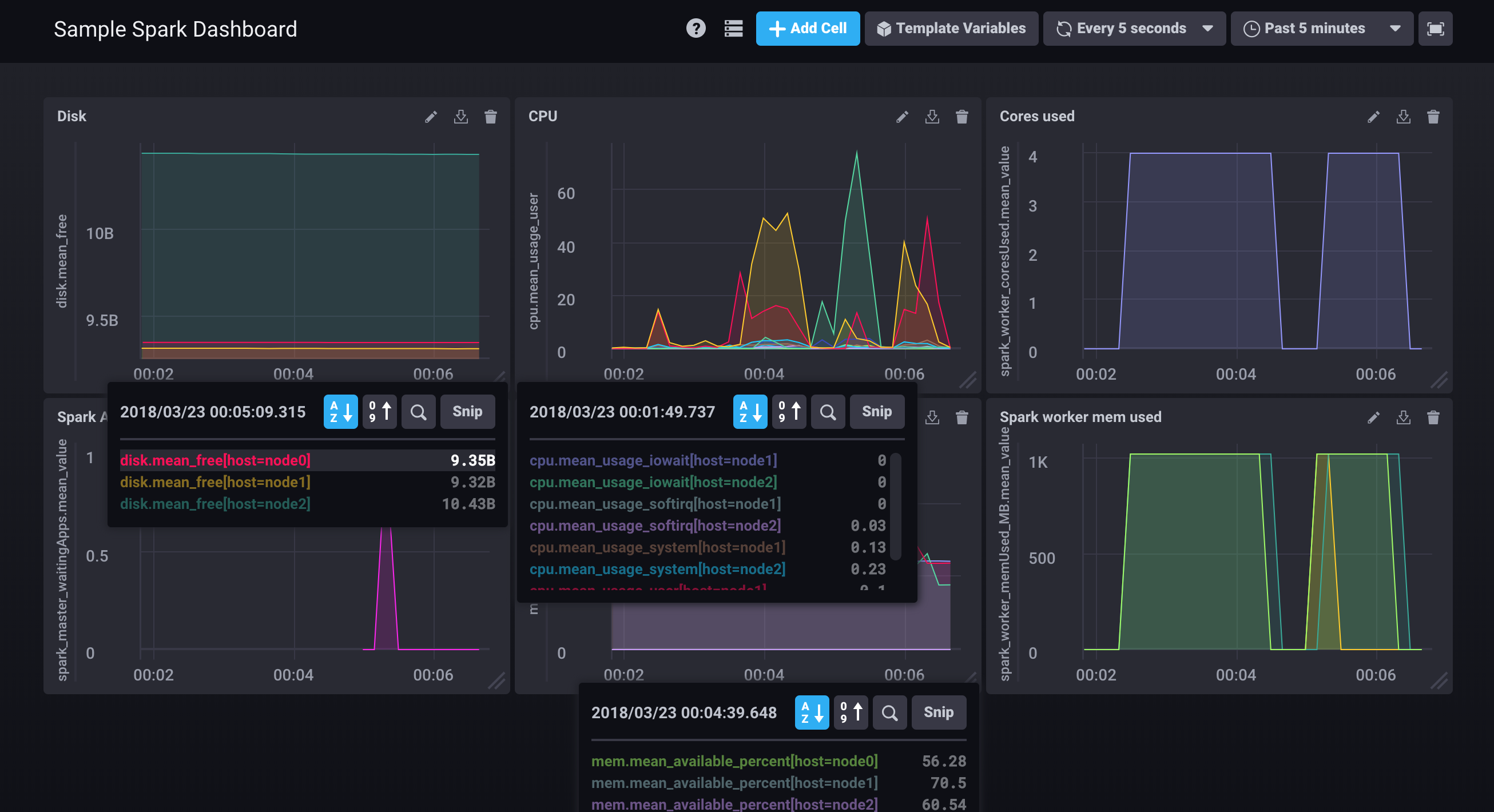

spark-cluster/README.md at master · cloudurable/spark-cluster · GitHub

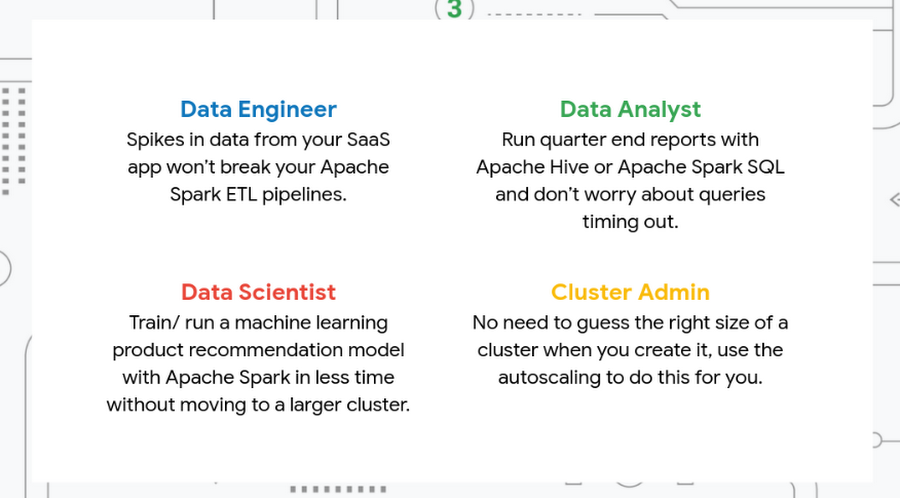

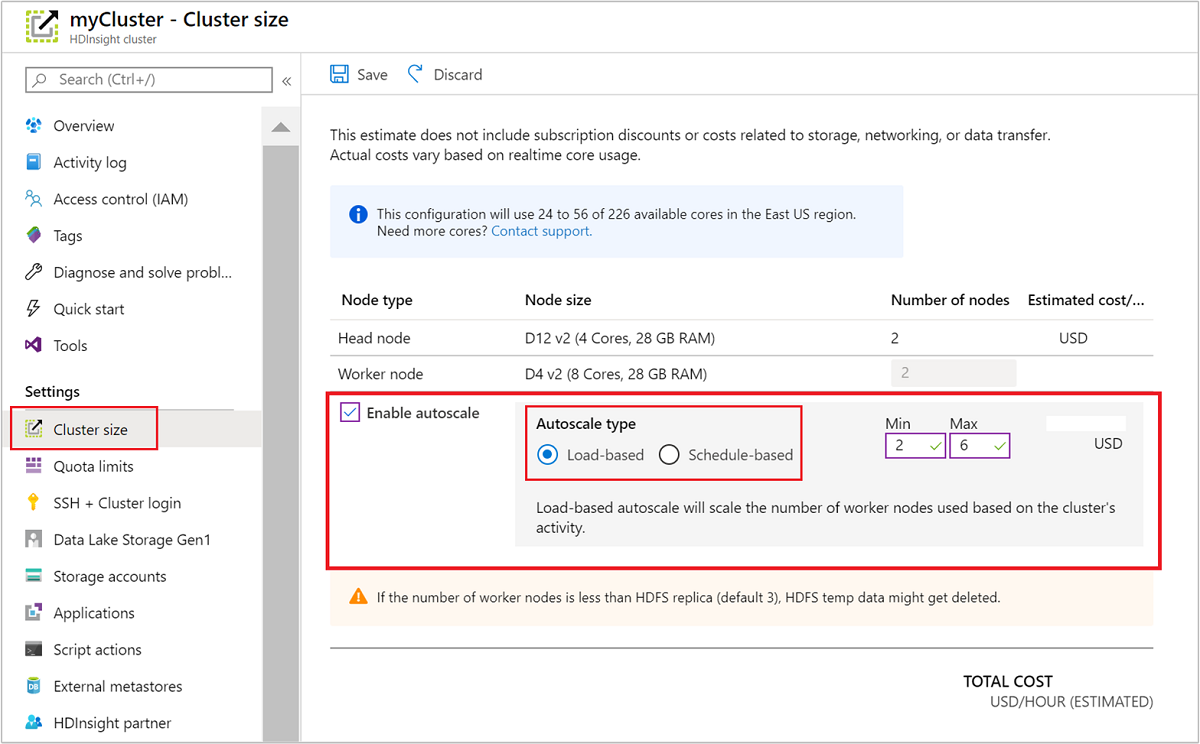

Autoscaling capabilities for Hadoop and Spark clusters

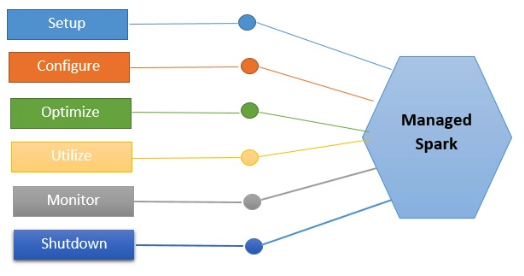

What is Managed Spark?

PySpark — The Cluster Configuration, by Subham Khandelwal

Calculating executor memory, number of Executors & Cores per executor for a Spark Application - Mycloudplace

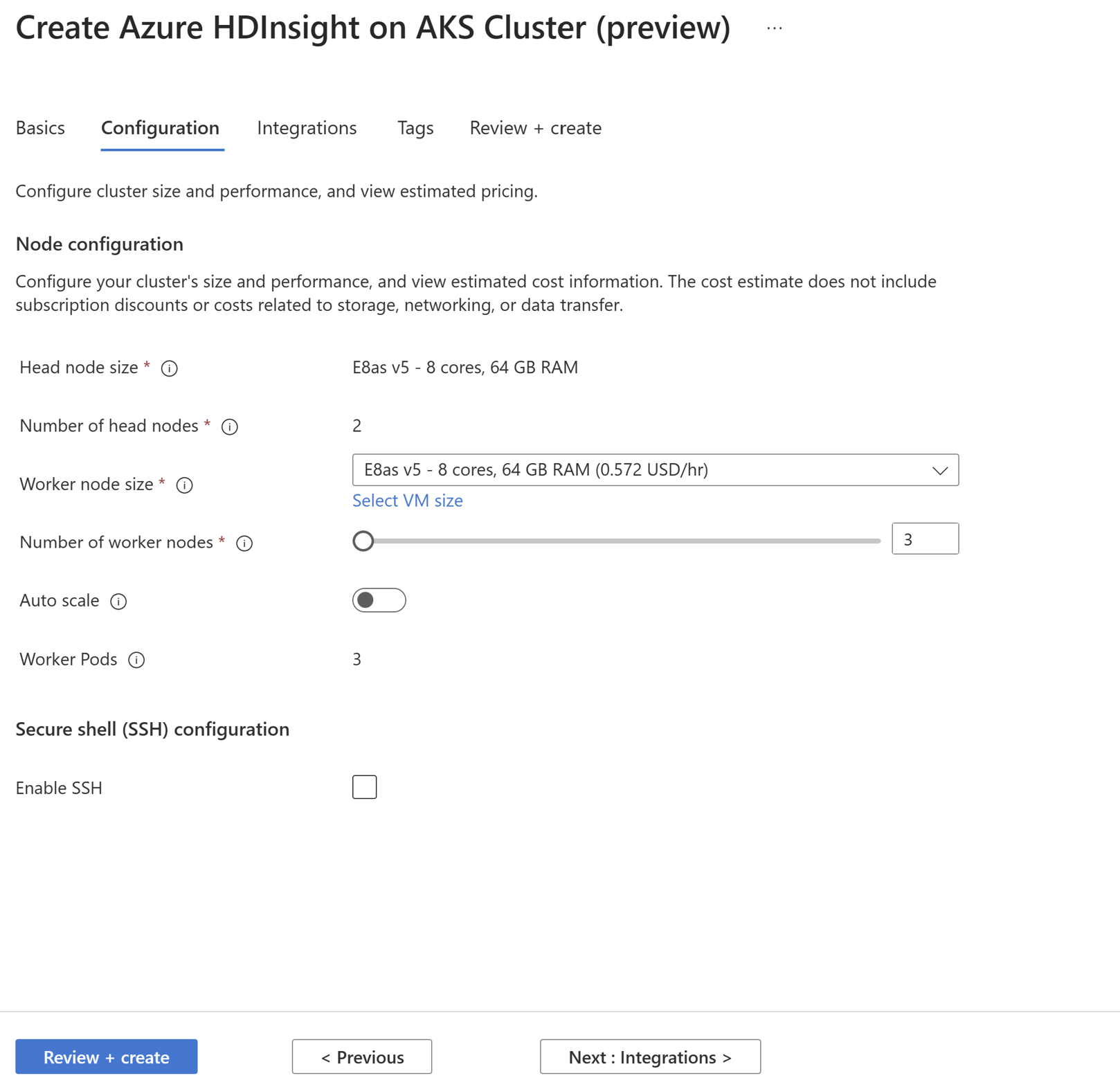

Create cluster pool and cluster - Azure HDInsight on AKS

How would I decide/create a Spark cluster infrastructure given the size and frequency of data that I get? - Quora

Automatically scale Azure HDInsight clusters